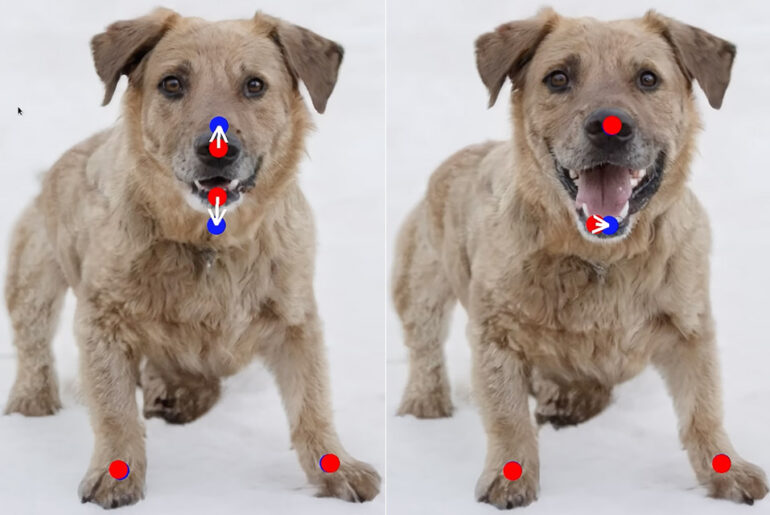

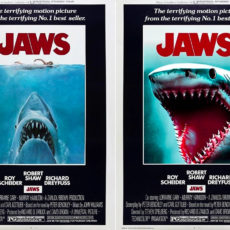

Typical generative adversarial network (GAN) tools rely on manually annotated training data or prior 3D models, but DragGAN is a new kind of artificial intelligence tool that lets users manipulate images by simply clicking and dragging. It was developed by researchers from Google, the Max Planck Institute for Informatics, and MIT CSAIL. It leverages a pre-trained GAN to synthesize images that not only precisely follow user input but also remain realistic.

This approach does not rely on domain-specific modeling or auxiliary networks. Instead, it optimizes latent codes so that multiple handle points move incrementally toward their target locations, and it uses a point-tracking procedure to follow the handle points as they move. According to the researchers, the resulting tracking technique outperforms other point-tracking approaches.
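To make the point-tracking idea concrete, here is a minimal sketch in Python with NumPy. It assumes that tracking is done by nearest-neighbor search over a feature map, restricted to a small window around the handle point's previous position. The article does not spell out the exact procedure, so the function name `track_point`, the `radius` parameter, and the random toy features are illustrative assumptions rather than DragGAN's actual code.

```python
import numpy as np

def track_point(features, ref_feature, prev_point, radius=3):
    """Find the pixel near prev_point whose feature best matches ref_feature.

    features:    (H, W, C) feature map of the current image (hypothetical input)
    ref_feature: (C,) feature vector sampled at the handle point initially
    prev_point:  (row, col) of the handle point before the latest edit step
    radius:      half-size of the square search window (assumed value)
    """
    h, w, _ = features.shape
    r0, c0 = prev_point
    # Clamp the search window to the image bounds.
    top, bottom = max(r0 - radius, 0), min(r0 + radius + 1, h)
    left, right = max(c0 - radius, 0), min(c0 + radius + 1, w)
    patch = features[top:bottom, left:right]
    # L1 distance between every feature in the window and the reference.
    dist = np.abs(patch - ref_feature).sum(axis=-1)
    dr, dc = np.unravel_index(np.argmin(dist), dist.shape)
    return int(top + dr), int(left + dc)

# Toy data: random features, then shift the content one pixel to the right
# to imitate the image changing after one optimization step.
rng = np.random.default_rng(0)
feats = rng.normal(size=(16, 16, 8))
ref = feats[5, 5].copy()
moved = np.roll(feats, shift=1, axis=1)

new_point = track_point(moved, ref, prev_point=(5, 5))
print(new_point)
```

In an editing loop, a function like this would be called after every latent-code update so that the next update pushes the handle point from its current position rather than its original one.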

“Both qualitative and quantitative comparisons demonstrate the advantage of DragGAN over prior approaches in the tasks of image manipulation and point tracking. We also showcase the manipulation of real images through GAN inversion,” said the researchers.