In a minimalist Tokyo lab, a woman lounges in a chair that could easily pass for something in a fancy spa. But she’s actually controlling a humanoid robot across the room with just the tiniest flexes of her muscles. The robot copies her every move, from lifting a box to wiping down a table to shaking someone’s hand. This is H2L’s Capsule Interface.

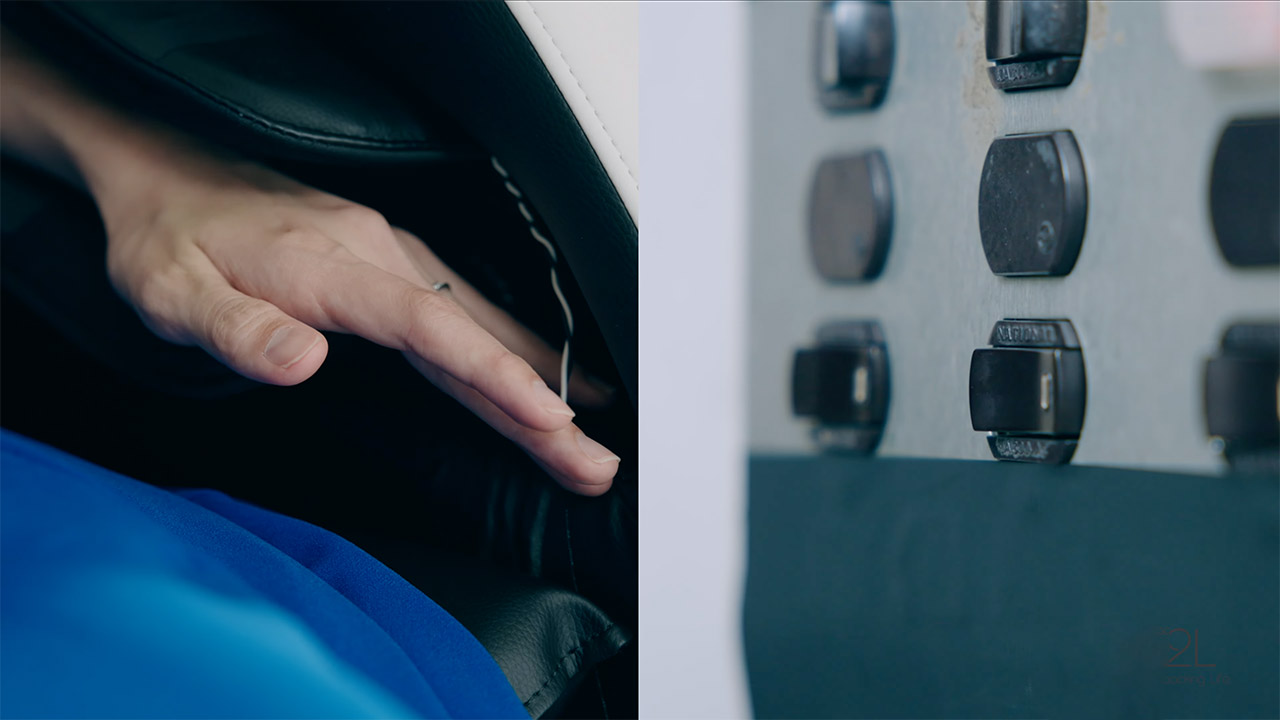

H2L’s Capsule Interface is like a direct line from your body to a robot’s actions. Forget clunky joysticks, gloves, or bulky exoskeletons: this setup uses muscle sensors embedded in a comfy chair or bed to pick up the smallest shifts in your muscles. Those signals go straight to a humanoid robot, such as the Unitree Robotics H1 used in H2L’s demos, which moves with the same intent and force as you do. “Our muscle-focused system lets robots mirror not just what you do but how hard you’re doing it,” H2L said in a press release.

This tech sidesteps the usual limits of motion-capture gear, such as inertial trackers or optical systems, which only care about where your arms are and miss the force behind your moves. H2L’s sensors catch subtle changes in muscle tension, like the difference between a firm handshake and a gentle wave. In a company video, a woman casually steers the H1 robot through tasks like cleaning and lifting, all while relaxing in her chair.

The possibilities are wild. At work, this could mean attending meetings or tackling tasks through a robot, no plane ticket needed. Delivery workers could operate robots from a hub, saving their backs while still moving heavy loads. Farmers could plant or check crops from miles away, sharing their know-how across fields. In healthcare, nurses or doctors could help patients remotely, and in entertainment, you could dive into virtual worlds and feel your avatar’s moves. H2L also hopes to add feedback that mirrors the robot’s actions back to the operator, making it feel like you’re right there in its shoes.

The muscle sensors are remarkably precise, picking up tension and movement at a level comparable to top-tier medical gear. Algorithms then process that data, tracking not just your limbs but how much effort you’re putting in. Unlike visual trackers, which get thrown off in cluttered spaces, or inertial units, which drift and lose accuracy over time, H2L’s system stays accurate by reading your body’s own signals. The result is a robot like the H1 that glides through a room, grabs objects, and interacts with people without the stiff, clunky feel of older setups.
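H2L hasn’t spelled out how its algorithms turn muscle readings into an effort estimate, so the sketch below is only an illustration of one common textbook approach, not H2L’s method. It assumes EMG-style electrical muscle signals. It computes a root-mean-square (RMS) envelope over short windows, normalizes it between a resting level and a maximum voluntary contraction (MVC) recorded during calibration, and maps that 0-to-1 effort value linearly to a gripper force. The function names, the calibration values `rest_rms` and `mvc_rms`, the `max_force_n` limit, and the linear mapping are all hypothetical.

```python
import math
from typing import List, Sequence


def rms_envelope(samples: Sequence[float], window: int) -> List[float]:
    """Hypothetical helper: RMS of raw muscle-signal samples over non-overlapping windows."""
    if window <= 0:
        raise ValueError("window must be positive")
    envelope = []
    # Step through the signal one full window at a time; a trailing partial window is dropped.
    for start in range(0, len(samples) - window + 1, window):
        chunk = samples[start:start + window]
        envelope.append(math.sqrt(sum(x * x for x in chunk) / window))
    return envelope


def effort_fraction(rms: float, rest_rms: float, mvc_rms: float) -> float:
    """Hypothetical helper: scale an RMS value to 0..1 between rest and maximum voluntary contraction."""
    span = mvc_rms - rest_rms
    if span <= 0:
        raise ValueError("mvc_rms must exceed rest_rms")
    # Clamp so noise below rest reads as 0 and over-exertion reads as 1.
    return min(1.0, max(0.0, (rms - rest_rms) / span))


def grip_force_command(effort: float, max_force_n: float) -> float:
    """Hypothetical helper: linear map from effort fraction to a robot gripper force in newtons."""
    return effort * max_force_n


if __name__ == "__main__":
    # Synthetic stand-ins for a strong squeeze and a light touch (not real sensor data).
    firm = [0.8, -0.8] * 50
    gentle = [0.2, -0.2] * 50
    for name, signal in (("firm", firm), ("gentle", gentle)):
        env = rms_envelope(signal, window=20)
        effort = effort_fraction(env[-1], rest_rms=0.05, mvc_rms=1.0)
        print(name, round(grip_force_command(effort, max_force_n=40.0), 1))
```

The point of the normalization step is that the same arm position can produce very different envelope levels. That is the kind of “how hard” information a pure position tracker never sees, and it is what would let a firm grip and a light touch become different force commands.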